AI Twin

A local-first Slack agent that intercepts @mentions, replies in your voice via Claude Code, and continuously distils your communication patterns and facts into a personal knowledge base — zero message history in the cloud.

The Problem with AI Agents

Most AI agents are powerful in isolation but awkward in practice: they live in a separate tab, demand context-switching, and forget everything between sessions. The harder problem isn't capability; it's integration. An agent that learns how you write, understands your codebase, and shows up where you already work is far more useful than one you have to visit.

AI Twin embeds an agent directly into Slack — where async work already happens. It intercepts @mentions, replies in your voice, handles PR reviews and Q&A, and continuously distils your communication patterns into a growing personal knowledge base. Crucially, every message stays on your machine.

Architecture

The system has two independently deployed components:

Slack Events ──► slack-dispatcher (Cloudflare Workers)
                      │
                      │ WebSocket (OTP-paired, capability token)
                      ▼
                 twin-runtime (Tauri desktop app, user's machine)
                      │
                      ├─ Claude Code CLI (local subprocess, stream-json)
                      ├─ Filesystem (raw/, patterns/, notes/, facts/)
                      └─ SQLite cache (threads / messages / settings)

slack-dispatcher is a stateless Cloudflare Workers service that handles Slack OAuth, event routing, OTP pairing, and chat.postMessage. It holds WebSocket connections open via Durable Objects, the Workers primitive for keeping long-lived, stateful connections alive in an otherwise stateless serverless environment. No message history is stored server-side.

twin-runtime is the local brain. It maintains the WebSocket connection to the dispatcher, orchestrates task execution, calls Claude Code as a local subprocess, manages memory distillation, and drives the desktop UI. All chat history and user knowledge live here.

Tech Stack

- Monorepo: pnpm workspaces, split into core (pure TS business logic) + desktop (Tauri app)

- Core: TypeScript / ES2022 · Zod v4 · Vitest

- Desktop: Tauri 2 · React 19 · Vite · Tailwind · shadcn/Radix

- Cloud: Cloudflare Workers · Hono · Durable Objects · KV

- Slack: slack-edge SDK · Block Kit · OAuth v2 · Events API

- Storage: SQLite (tauri-plugin-sql) · Filesystem (markdown as single source of truth)

- Credentials: OS Keychain (keyring crate) with file fallback

- CI/CD: GitHub Actions — universal Apple binary · notarization · Tauri updater signing

Key Design Decisions

Layered auth. The system has four independent trust chains, each solving a different problem. Slack → Worker traffic is verified via HMAC-SHA256 signature (slack-edge SDK, 5-minute replay window). Workspace bot tokens are resolved per-request from KV using the incoming team_id. The most interesting layer is runtime pairing: the desktop client exchanges a RUNTIME_SECRET bearer token for a one-time code (OTP), which the user enters in Slack via /connect, binding their Slack identity to the runtime's runtimeId. The WebSocket upgrade then consumes this as a capability token, so no further shared secret is needed. Reconnects reuse the persisted binding; /disconnect revokes it instantly.
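To make the first trust chain concrete, here is a minimal sketch of what Slack request verification involves. In AI Twin this check is delegated to the slack-edge SDK, so the code below is not the project's implementation. It assumes Node's crypto module for illustration (a Worker would typically use Web Crypto), and the function names are hypothetical. The signing scheme itself (a `v0:<timestamp>:<body>` base string, HMAC-SHA256, and the `X-Slack-Request-Timestamp` / `X-Slack-Signature` headers) follows Slack's documented format.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// 5-minute replay window, as described in the text above.
const REPLAY_WINDOW_SECONDS = 5 * 60;

// Builds the signature Slack sends: "v0=" + hex(HMAC-SHA256("v0:<timestamp>:<body>")).
function computeSlackSignature(signingSecret: string, timestamp: string, rawBody: string): string {
  const base = `v0:${timestamp}:${rawBody}`;
  return "v0=" + createHmac("sha256", signingSecret).update(base).digest("hex");
}

// Hypothetical helper: returns true only for a fresh, correctly signed request.
function verifySlackRequest(
  signingSecret: string,
  timestamp: string, // value of the X-Slack-Request-Timestamp header
  signature: string, // value of the X-Slack-Signature header
  rawBody: string,   // the unparsed request body
  nowSeconds: number = Math.floor(Date.now() / 1000),
): boolean {
  const ts = Number(timestamp);
  if (!Number.isFinite(ts)) return false;

  // Reject requests outside the replay window.
  if (Math.abs(nowSeconds - ts) > REPLAY_WINDOW_SECONDS) return false;

  const expected = Buffer.from(computeSlackSignature(signingSecret, timestamp, rawBody));
  const received = Buffer.from(signature);

  // timingSafeEqual throws on length mismatch, so compare lengths first.
  return expected.length === received.length && timingSafeEqual(expected, received);
}
```

The comparison is constant-time so that a mismatching signature does not leak how many leading characters were correct.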

Filesystem as truth. User knowledge — communication patterns, internal facts, notes — lives in human-editable markdown files. SQLite and any future embeddings index are disposable derived caches. The knowledge base can be git-versioned, diffed, and synced across machines without any proprietary format.

Distillation over retrieval. Rather than vector search over raw logs, the system periodically distils raw interaction evidence into structured persona files (patterns/, facts/). These are injected directly into the system prompt for the next task — readable by the user, auditable, and incrementally correctable.
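As a rough illustration of "injected directly into the system prompt", the sketch below concatenates distilled persona files into one prompt under a size budget. The `PersonaFile` shape, the `composeSystemPrompt` name, the file names, and the character budget are all assumptions made for this example; the actual composer may order, format, or trim files differently.

```typescript
// Hypothetical in-memory view of a distilled markdown file.
interface PersonaFile {
  path: string;    // e.g. "patterns/tone.md" (illustrative name)
  content: string; // raw markdown
}

// Joins non-empty persona files into one prompt, stopping before the budget is exceeded.
function composeSystemPrompt(files: PersonaFile[], maxChars: number): string {
  const sections: string[] = [];
  let used = 0;

  // Sort by path so the same files always yield the same prompt.
  const ordered = [...files].sort((a, b) => a.path.localeCompare(b.path));

  for (const file of ordered) {
    const body = file.content.trim();
    if (body.length === 0) continue; // skip empty files

    const section = `## ${file.path}\n${body}`;
    if (used + section.length > maxChars) break; // budget reached
    sections.push(section);
    used += section.length;
  }
  return sections.join("\n\n");
}
```

Because the input is plain markdown, a user who disagrees with the agent's behaviour can edit the file and see the change reflected in the very next prompt.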

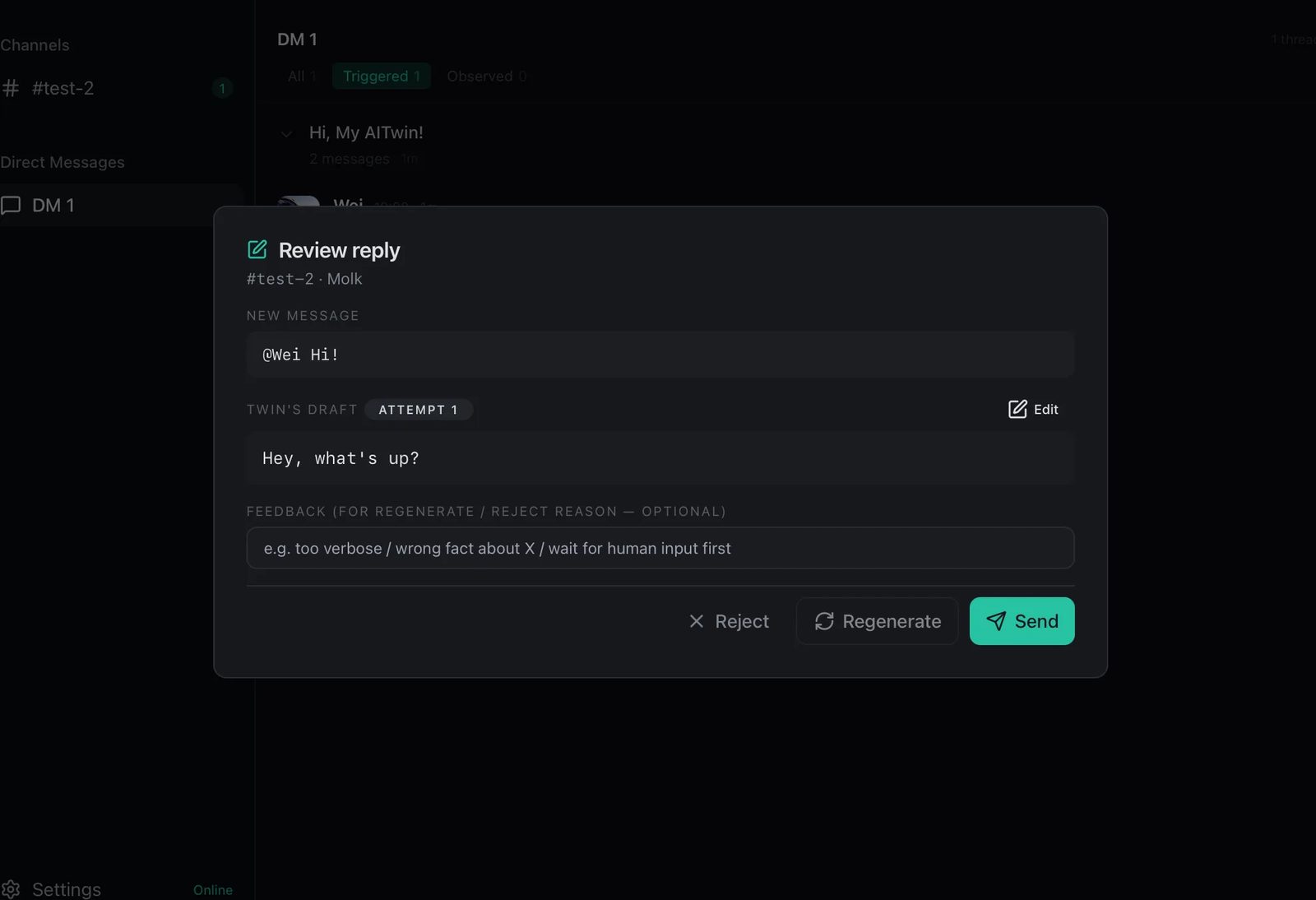

Trust Ramp. Three modes share one human-in-the-loop modal: Guided (every reply reviewed before sending), Auto + Escalation (runs autonomously, surfaces <escalate> tags when uncertain), and Pure Auto (fully hands-free). The escalation path re-injects reviewer feedback and reruns the task, up to three times.
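The three modes can be read as one small decision function. The sketch below is a simplification under stated assumptions: the mode identifiers, the `nextStep` name, and detecting escalation by the literal substring `<escalate>` are illustrative, and the text does not say what happens once the three reruns are used up, so falling back to human review at that point is a guess.

```typescript
type TrustMode = "guided" | "auto-escalation" | "pure-auto";
type NextStep = "review" | "escalate" | "send";

// Rerun limit taken from the text: "up to three times".
const MAX_RERUNS = 3;

// Decides what to do with a finished draft reply.
function nextStep(mode: TrustMode, draft: string, rerunsSoFar: number): NextStep {
  // Guided: every reply is reviewed before sending.
  if (mode === "guided") return "review";

  // Pure Auto: fully hands-free, send whatever came back.
  if (mode === "pure-auto") return "send";

  // Auto + Escalation: send unless the model flagged uncertainty.
  const flagged = draft.includes("<escalate>");
  if (!flagged) return "send";

  // Assumption: once the rerun budget is spent, hand the draft to a human.
  return rerunsSoFar < MAX_RERUNS ? "escalate" : "review";
}
```

On "escalate", the caller would collect reviewer feedback, re-inject it, rerun the task, and call `nextStep` again with the incremented rerun count.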

Stateless dispatcher. The cloud component routes events but stores nothing. This isn't just a privacy decision — it also eliminates the cost and compliance surface of storing Slack message history on a server.

One @mention, full loop:

- Dispatcher pushes DispatchPayload → written to raw/threads/ + SQLite

- PromptComposer assembles system prompt from distilled persona files, wraps thread history

- Executor spawns claude --print --add-dir <thread-dir>, streams events

- After 10s, sends a "Working on it…" buffer reply (auto mode)

- On completion, slices response into 4000-char chunks back to Slack; guided mode surfaces the review modal; <escalate> re-injects feedback and reruns

- Full run (system prompt, wrapped message, event stream, result, token cost) written to raw/tasks/
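The chunking step in the loop above can be sketched in a few lines. The 4000-character size comes from the text; the `chunkReply` name and the preference for breaking at a newline rather than mid-line are assumptions added for readability, not a description of the shipped behaviour.

```typescript
// Chunk size from the text: responses go back to Slack in 4000-char slices.
const SLACK_CHUNK_SIZE = 4000;

// Splits a reply into chunks of at most `size` characters.
function chunkReply(text: string, size: number = SLACK_CHUNK_SIZE): string[] {
  if (size <= 0) throw new RangeError("size must be positive");

  const chunks: string[] = [];
  let rest = text;

  while (rest.length > size) {
    // Assumption: prefer the last newline within the window; otherwise hard-cut.
    let cut = rest.lastIndexOf("\n", size);
    if (cut <= 0) cut = size;

    chunks.push(rest.slice(0, cut));
    // Drop the newline we split on so the next chunk does not start with a blank line.
    rest = rest.slice(cut).replace(/^\n/, "");
  }

  if (rest.length > 0) chunks.push(rest);
  return chunks;
}
```

Each chunk would then be posted in order as its own message in the thread.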

Outcome

A production-ready, local-first Slack agent. The architecture cleanly separates the stateless cloud layer (OAuth, routing, WebSocket bridging) from the stateful local layer (AI execution, memory, UI) — keeping the cloud component cheap and auditable, while giving the local runtime full access to the user's context without any of it leaving the device.